The Thanatorium is a speculative assisted-suicide service in Soylent Green. Suicide and death are not easy topics, and I will do my best to address them seriously. First, if you are considering suicide or dealing with it, please stop reading this and talk to someone about it. I am unqualified to address such issues, and this blog is not the place to work through them.

There are four experiences to look at in the interface and service design of the Thanatorium: The patient, their beneficiaries, the usher to the beneficiaries, and the attendants to the patient. This post is about the patient themselves. Since there aren’t any technological interfaces, this will be a review of the service design from the patient’s and Soylent’s perspectives. If you’re only into this blog for technological interfaces, this is a post to skip, as it’s going to be about set design, lighting, props, signage, and ritual design, among other things.

Sol’s goals

Part of how we measure the efficacy of an experience is by checking whether it helps its user achieve their goals in the ways they would like them achieved. So let’s say that Sol’s goals are to use the service to have a good death, i.e., to pass painlessly and with dignity, and to have his belongings passed along according to his wishes. He also wants psychological comfort, which here means helping him transition out of the world he is leaving: a liminal space for the ceremony that points toward notions of eternity and away from the horrible world outside.

We are going to completely bypass the script question of why Sol doesn’t bother to tell Thorn the Dark Secret in his goodbye note, but then does tell him when Thorn happens to join him at the Thanatorium. That is what it is.

Sol’s experience

After Sol learns that his options are cannibalism or starvation, he decides to die with dignity. To enact this wish, he dresses in his Sunday best and heads to the state-sponsored Thanatorium, housed in a low-rise building at the end of a wide street in downtown New York City.

Authors Islam Abohela and Noel Lavin insightfully note in their 2020 paper, The Height of Future Architecture: Significance of High versus Low Rise Architecture in Science Fiction Films, that the horizontality of this building contrasts with earlier, vertical sci-fi visions of the cityscape as lofty and aspirational. In short, the building lies in a horizontal repose suited to its purpose. Further, the bright light spilling from its frosted-glass doors onto the street helps sell its next-worldly promise, especially as the terminus of a dark road.

Initial greeting

At Sol’s approach a young worker opens the door and welcomes him. (How did she know of his approach, given the frosted glass? Let’s presume cameras, though we see no hint of this.)

With the door open, Sol feels the air conditioning pouring from inside and says, “It feels good.” She replies, “Yes, sir. Won’t you please come in?” He hesitates a moment under the gravity of it, but proceeds. Inside, he passes through a turnstile, and the greeter escorts him to one of the intake queues.

Worldbuilding question: The New York City of Soylent Green is oppressively hot and overcrowded. You would imagine that people would want to feel that refreshing cool air themselves, even if they weren’t there to die. I would expect people to be lying on the sidewalk near the doors on the off-chance of feeling a cool breeze. But the street leading to the Thanatorium is vacant. Why? You might think, well, it’s an authoritarian state, and curfew is probably enforced brutally. But then why is Sol allowed to just amble his way there? It would have been a nice beat to see Sol approached and challenged by an angry cop, only to have Sol point up the street to the Thanatorium, at which the cop softens and nods, allowing Sol to continue. This would have signaled that, despite curfew, the Thanatorium is open for “business” 24 hours a day, 7 days a week.

Intake

Take a moment to appreciate the set design: the placid blues and nondescript “plop art” backdrops sell this space as a hospital rather than, say, an airport terminal or a church. It could have gone all “heavenly gate,” but that would have come too soon in the patient experience and lacked the personalized immersion that leads to…uh…the ecstasy meat (a gross, backworlded concept introduced in the beneficiaries post). The service keeps its powder dry to maximize that main event and thereby its output. So this design wins for being both familiar to the patients and effective for Soylent.

The film cuts away to show Thorn returning home to find Sol’s goodbye letter, and then running to the Thanatorium. When we cut back to Sol, he is in the middle of answering questions from the intake staff, e.g., his favorite color and genre of music. Sol responds, and the intake clerk marks his answers on a reusable plastic form. Before signing, Sol asks to confirm the length of the ceremony: “A full 20 minutes?”

This scriptwriting moment deserves a mention. It comes across as a negotiation, but what is being exchanged here? And what could Sol do with a guarantee, when he won’t be around if this mustache reneges on the deal? Nothing, of course, but it really underscores the transactional nature of the place. One’s death is so cheap in the world of Soylent Green that one can use it as a bargaining chip. Dark.

There’s a lot that we don’t get to examine in this intake experience because the scene is cut, but per Sol’s goals identified above, we have to imagine it would include questions about his beneficiaries and privacy. Additional questions appear in the text below.

Theater 11

The usher comes and retrieves Sol, making small talk as he escorts him down halls, past the beneficiaries’ observation room, to “theater 11,” the death chamber to which he’s been assigned, where attendants wait beside a bed in the center of the room. The inclusion of “11” reminds us that there are many such theaters in the Thanatorium. It would have been nice for the beneficiaries-only room to bear a similar number, e.g., “Observation 11: beneficiaries only,” linking the two together for the users and the audience.

We’ll get back to Sol’s experience in a moment, but first a note on the floor markings and the architecture.

I first thought the red line on the floor might be a wayfinding line like those in some hospitals. If it was a particularly busy day and the patient ambulatory, the intake personnel could say, “Follow the red line on the floor to theater 11.” But a glance at the preceding scenes shows that these markings are present only in the antechamber leading into the theater and in the theater itself. So it serves more as decoration: a red line leading to a red circle, in the middle of which is a white, gray, and black circle. The end of the line in two senses.

This sense of the terminus is reinforced by the design of the room. The small passageway down which Sol walks opens into the more expansive theater, creating a sort of “reverse womb” that implies a balance between the beginning and end of life. It’s not critical that patients pick up on any of this, of course, but it all contributes to a sense of liminality, which serves both Sol and Soylent.

So far, so good, but I wish the lighting here had echoed the approach to the building. It should have been a glowing pool of light at the end of a dark passageway, rather than the even overhead lighting reminiscent of a school cafeteria that we see in the film. Pools of light in the center, combined with many flickering pinpoints of light at the periphery, would have increased the sense of otherworldliness and unified the approach to the building with the entrance to the theater, creating a rhythm of self-similar spectacle. It also would have let the size of the 180° screen become apparent only once the ceremony started, adding to its thrill and overwhelming effect.

The attendant behavior

In service design, the behavior of the frontstage staff is of particular concern, as humans are good at reading other humans for cues about unfamiliar things. In this case, the attendants are silent, wear beatific expressions, and move with a dance-like deliberateness throughout their parts. This is perhaps the most effective cue-of-transition for the patient. The outfits are a little goofy, but they borrow semantically from Western Christian liturgy, so they are kind of appropriate. If the patient were atheist or from a different religious tradition, costumes with different signifiers would be more appropriate.

It’s also worth noting that not everyone is comfortable being touched by strangers. The attendants’ touch signals warmth in the scene, but might feel threatening to some patients. That’s another question to add to the intake questionnaire.

Disrobing

Once Sol is in the theater, the attendants greet him with silent handshakes, lead him to the bed, and begin to help him disrobe. This segment raises many questions.

Why does he need to be naked?

I get why he is disrobed here, from Soylent’s perspective. I’ve never been a mortician, but it does seem that getting clothes off a living person would be easier than getting them off a dead one. Why make the task harder for Soylent employees down the line? Just work it into the ceremony, some product manager says. And from Sol’s perspective, he might like to see his clothes taken away in a nice basket, with some assurance that they will be washed and given back to the community: an additional sign that he’s doing a good, selfless thing in this world of dwindling resources.

But then there are the pants. Maybe it’s me, but there is no dignified way to remove one’s pants in front of other, clothed people. Did they help him out of his pants? Did he take them off himself and hand them over? Is he just in his underwear? All of it seems awkward.

I think the service could take a privacy cue from hospitals, public pools, and spas: provide a small room where patients can undress themselves and change into a robe. This would also be an opportunity to take a shower, which the movie shows is a cherished luxury in the world of Soylent Green, and another reward to lure citizens. Water is in short supply, but the corpses sent en masse to the Exchange for processing aren’t otherwise cleaned, so a shower would be another nice, hygienic worldbuilding hint.

In the scene, the disrobing is taken as a solemn moment, but Sol is distracted from thinking too hard about it by the appearance of an orange floodlight.

That orange floodlight

During the disrobing, a floodlight in Sol’s favorite color switches on. I complained briefly about this in the prior post, but what’s causing this light to come on? The usher is back at intake, so it’s not him. Maybe the light is on a timer, but a timer seems hard for the attendants to coordinate with everything else that needs to happen.

Also, why does it come on at this moment in the ceremony? It might be a deliberate distraction for Sol, meant to focus his attention on the meaning of the ceremony rather than the mundane disrobing, but if so, you might think the light should come on before the disrobing begins. But recall that it’s only happenstance that Sol’s favorite color is the warm and flattering orange. If a patient’s favorite color happened to be blue (the most popular favorite color around the world), it would give everything in theater 11 a cool, detached appearance and lend the patient’s own skin a deathly pallor. Not great for the experience.

Much better would be to keep the custom-color floodlight off until the overture begins, when the patient’s attention is focused not on themselves but on the chamber around them, and then bring it up with the rise of the music, in response to the usher’s controls. This would maximize the impact of the color on Sol’s emotional state without making his own skin or the attendants look off-putting.

Getting onto the bed

Once Sol is disrobed, the attendants help him onto the bed. How they do this is left off-screen, but it’s a nontrivial problem since, as you can see in the screenshot, Sol is 5′7″ and the bed is well above his waist. Hopefully there’s a set of retractable steps under the bed skirt that makes the bed accessible to Sol without his having to be hoisted up by the attendants, which would be undignified.

Hemlock

Once Sol is in bed, the attendants provide the “hemlock” (which is what I’m calling the deadly draught, in homage to the death of Socrates), and Sol drinks.

We don’t see the glass in the room before it’s handed to him, but since this is the point of no return, I imagine it deserves some attention. Should it be waiting already poured, or should he watch it being poured? Should he pour it himself? If poured, should it be from a gold, stoneware, or glass pitcher? Should there be a tray? Where should all this be staged?

For materials, gold is a good funereal symbol because it never tarnishes, but it might be too tempting a theft target for poverty-stricken citizens. Stoneware has a nice connotation of being of-the-earth, but it is a poor choice here because it is opaque, implying that its contents are something to be hidden. So I’d recommend a simple glass pitcher that emphasizes clarity. The Toyo pitcher shown below has no handle and so requires two hands to operate, lending a ceremonial, human feel to the act of pouring. While we’re at it, ditch the footed highball glass for a stange or zombie glass to match the pitcher’s simplicity. Set them on a tray on an end table beside the bed, in their own pool of light, and have the attendant pour and hand the glass to the patient. When the attendants depart the chamber, one can take the tray out for cleaning, and the other can push the end table back under the bed.

Another argument for delaying the floodlight until the overture is that colored light changes the apparent color of the drink. It just so happens that Sol’s orange flatters the amber draught, but if his favorite color had been, say, red, it might have made the drink look like a wicked ink. Leaving the floodlight off keeps the drink looking pleasant and unthreatening.

Sol’s face shows no reaction to the taste of the hemlock, so we have no clue how it’s flavored, but it’s in everyone’s interest that it be palatable, if not pleasant. It would have been a nice touch to have him choose from a menu of flavors at intake as well, not least to mask the taste of whatever other drugs are mixed in.

Once Sol has imbibed the draught, he lies back on the wedge pillow and the attendants draw a sheet up to his chest.

As the orange floodlight dims to a candlelight whisper and the attendants depart, Sol waits for the overture to begin.

Overture

Alone at last, Sol is treated to an audio overture as the drugs work through his system. The music is the principal theme from the first movement of Tchaikovsky’s Symphony No. 6, the “Pathétique.” He stares up at the ceiling, bathed in his favorite color and listening to his favorite music, unaware that things are about to become even more spectacular.

Cinerama

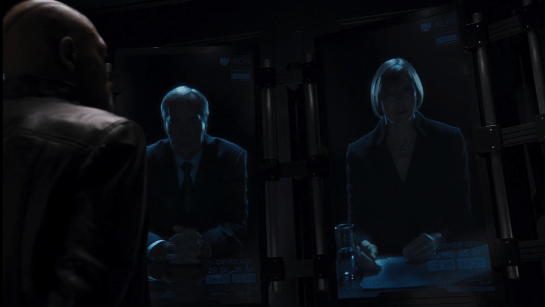

With the overture complete (and, per my ecstasy meat theory, the MDMA and opiates having kicked in), the audio-visual presentation starts. The music changes to the first movement of Beethoven’s Symphony No. 6, the “Pastoral,” and a very wide-angle video begins on the wrap-around screen above him, opening on a verdant field of tulips blowing in a breeze.

It later transitions to images of fauna, other flora, wholesome livestock, and sunsets: all romantic scenes from a highly selective memory of Earth’s heyday. It’s important to remember that audiences in 1973 may have heard of a Cinerama display like this, but few of them had seen one. And the 180+° screen in the film dwarfs the original Cinerama 2.65:1 display ratio. So though folks today may yawn at this compared to IMAX or Oculus VR headsets, at the time it would have seemed very sci-fi.

From our vantage point, it all seems a little cruel, bathing Sol in scenes of what he cannot have and what for him will never be, but maybe it points to an afterlife where the things you recall fondly will be yours again, in abundance. (Hey, that seems like the formula for every afterlife story.) Combined with the drugs in Sol’s system, the spectacle would help flood his mind and body with euphoria and all the pleasant neurotransmitters that entails.

A few minutes into the presentation, the SPEAKING PERMITTED light of the beneficiaries’ interface begins blinking, and the patient is able to talk to their loved ones. This interrupts the spectacle of the display, but adds a flood of additional emotions (and thereby hormones) from heartfelt declarations of love and farewell. Immediately afterward, “Morning Mood” from Grieg’s Peer Gynt Suite No. 1 plays over biophilic videos: Alpine mountainscapes with grazing donkeys, tarns with floral banks. Finally, it segues to scenes depicting the end of a day: a sunset over waves crashing on the black rocks of a pristine West Coast beach, another sun setting through gaps in swiftly drifting clouds.

The screen fades to black as “Aase’s Death” from the Peer Gynt Suite plays. In the film, this is the point where Sol shares the Dark Secret and tells Thorn he must go to the Exchange and provide proof to the elders. (Ugh. Screenwriters, again: if this was so important, why did he wait until this moment, which he was not sure would come, to convey it? It makes no sense. But I digress.)

The camera stays close on their faces for this final beat, so we don’t know what is playing on the screen, but I’d like to think it’s images of stars and nebulae, evoking not just the end of a terrestrial day but a connection to things that by comparison seem eternal and everlasting.

Communication signals

The dialogue points to another signal missing for Sol: how does he know when the audio channel to the observation room is open? Ideally, the audio channel would be tied to the state of the viewing portal. That is, audio is connected when the portal is open and they can see each other, and off when the portal is closed. But we know that Soylent wants the usher to control the channels so he can silence either party at will, so in lieu of that, let’s give Sol a signal near the observation window that shows when the audio channel is open. It should resemble the interface on the other side, in the observation room, but it would have to be redesigned for a 10-foot rather than a 2-foot experience. It also must not distract the patient while their attention is on the Cinerama, so a dim, backlit visual might be enough for sighted users. Separate, custom-designed rooms should be built for differently abled patients.

After his plea to Thorn, Sol finally passes, marking the end of his experience with the Thanatorium.

All told, Sol’s experience suits his goals fairly well. He wants a sense of dignity, spectacle, importance, connection to his loved one, and otherworldliness, and he receives them. There are small things to fix throughout, as noted above.

My biggest criticism is that the patient is physically separated from loved ones, when a held hand might take the edge off the fear of death and add a nice dose of oxytocin to the result. But Soylent’s interest lies in maximizing control of the end product, so something this risky would never make it into the final design.