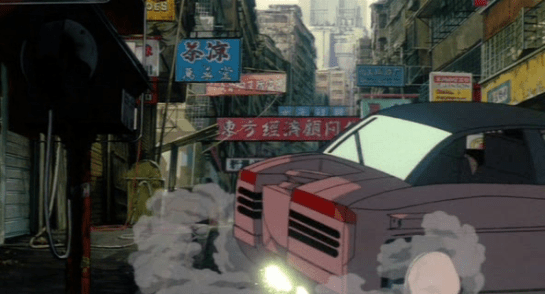

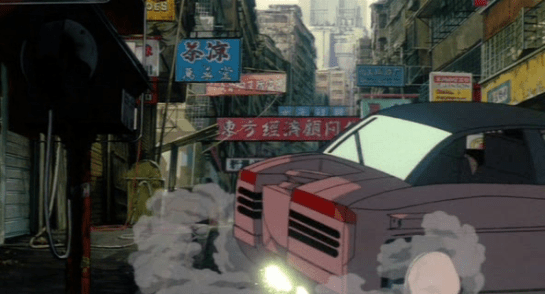

When the anonymous Section 6 operatives infiltrate and attack Section 9 to abduct what remains of the cybernetic body housing Project 2501, you’d think the last thing on their minds would be courteous driving. Yet when they are fleeing Togusa’s mighty mullet-fueled pistol rage, we see a surprisingly polite feature of their car.

Speeding along, they come to a cross-alley where they nearly run into a passing garbage truck. They slam on their brakes, and reverse the car to give the truck some room. When they’re reversing, a broad red panel on the back of the vehicle illuminates the English word “BACK.”

The signal disappears when the brake is pressed, and the entire panel glows bright red.

We see the rear ends of other vehicles throughout the movie, and none of them has a display surface that could present such a signal. Even Batou’s ride (and he’s a badass) lacks anything like one.

This is unique in the film to this vehicle. It seems that yes, Section 6 is not only trying to cover the tracks that lead to the artificial intelligence that they have created, but is also driving the most polite getaway car ever while doing it.

To be clear: This is a bad idea

First, of course, driving around in a unique vehicle goes against the whole plan of trying to get away. So, there’s that.

Secondly, why is it in English? We see a lot of signage in the movie, and it’s all Chinese (tip o’ the hat to commenter Don for helping me identify the characters), so this is another conspicuous signal. We do see broken-English labels on the virtual 3D scanner, but this “interface” English in software is not unheard of.

Lastly, it’s unsafe. In traffic accidents, split seconds of delay can be deadly, and reading is a slower process than just seeing. The more common white (or amber in the antipodes) reversing lamps are a much more arresting, immediate, and safe signal to the drivers behind you, and so would make a much better choice.